Building on Salesforce’s Boring Foundation (We Mean That as a Compliment)

The platform underneath the agent matters more than the agent itself

Part 4 of The Accidental Agentic Enterprise series. [Start with Part 1 here.]

Author: John Pettifor

Nobody gets excited about metadata.

Nobody has ever walked out of a conference keynote buzzing about field-level security or audit trail retention policies. No LinkedIn post has gone viral over a well-implemented permission set. At every AI event I attend, the energy is in the demos: the agent that writes code, the copilot that drafts the email, the model that reasons through a problem.

But here’s what I keep coming back to as we build Delivery Intelligence: the most important technology decision in our AI strategy isn’t which model we use. It isn’t which agents we’ve built. It’s the platform they run on. And the reason that platform works isn’t because of anything flashy. Salesforce is, at its core, a beautifully boring piece of enterprise software.

I mean that as the highest possible compliment.

A Confession About the SaaSpocalypse

I need to be honest. We didn’t choose Salesforce for our AI strategy. We’re a Salesforce partner. Salesforce is what we do. When we set out to build Delivery Intelligence, the question was never “which platform should we build on?” It was “can the platform we’re already on support what we need?”

For about six months, I wasn’t sure the answer was yes.

Late 2024 into early 2025, I was genuinely concerned about Salesforce’s ability to compete in the AI landscape. The SaaSpocalypse narrative was everywhere: if AI can generate entire applications and agents can automate workflows end-to-end, what’s the point of a structured SaaS platform? Why pay for Salesforce when you could just build it?

I heard that argument from smart people. Seriously entertained it. And I now think it’s almost entirely wrong.

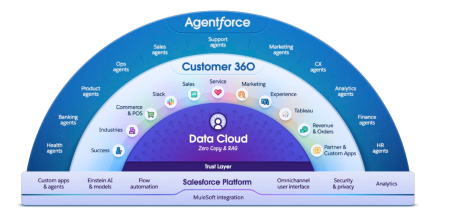

After months of building agentic AI on top of Salesforce, I believe the SaaSpocalypse misreads what these platforms provide. Yes, how they’re used will change drastically. Interfaces will evolve. Workflows will be reimagined. But the structured data models, permission frameworks, audit infrastructure, and decades of domain-specific business logic? That core is what enables AI in the enterprise. It doesn’t compete with AI. It’s the foundation AI requires.

I’m more bullish on Salesforce now than I was before we started building.

The “Boring” Salesforce Platform, and Why We Love It So Much

When I say boring, I’m talking about things that have been true about Salesforce for years. These aren’t new AI features. They’re foundational architecture decisions made long before anyone was talking about agents.

- Metadata-driven: Every object, field, and relationship is described in metadata the platform understands natively. You don’t have to teach an agent what a Contact is or how Opportunities relate to Accounts. The platform has known that for twenty years.

- Permission-governed: Role hierarchies, profiles, permission sets, field-level security, org-wide defaults, sharing rules. A deeply mature system for controlling who sees what and who does what. This isn’t bolted on. It’s baked into every data access decision.

- Natively audited: Who changed what, when, from what value to what value. Setup audit trail for configuration changes. Login history. Event monitoring for enterprise editions. The receipts exist by default.

- Schema-enforced: You can’t throw a JSON blob into Salesforce and hope for the best. Data has types, validation rules, required fields, relationships with referential integrity.

None of this trends on social media. All of it is what makes agentic AI trustworthy at scale.
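To make the metadata and schema points concrete, here is a minimal Python sketch of the idea: an agent's proposed record is checked against field metadata before anything is written. This is an illustration only, not Salesforce's API and not our production code. The `FieldMeta` class, the `REQUIREMENT_META` object, and the field names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class FieldMeta:
    """Hypothetical field description: type, required flag, allowed values."""
    py_type: type
    required: bool = False
    allowed: frozenset = frozenset()  # empty means any value of the right type


# Hypothetical metadata for an illustrative "requirement" object.
# This is NOT a real Salesforce describe result.
REQUIREMENT_META = {
    "title": FieldMeta(str, required=True),
    "priority": FieldMeta(str, required=True,
                          allowed=frozenset({"Low", "Medium", "High"})),
    "estimate_hours": FieldMeta(float),
    "project_id": FieldMeta(str, required=True),
}


def validate(record: dict[str, Any], meta: dict[str, FieldMeta],
             known_projects: set[str]) -> list[str]:
    """Return a list of problems; an empty list means the record may be written."""
    errors = []
    # Fields the metadata does not know about are rejected outright.
    for name in record:
        if name not in meta:
            errors.append(f"unknown field: {name}")
    # Required fields, types, and allowed values come from the metadata.
    for name, fm in meta.items():
        if name not in record:
            if fm.required:
                errors.append(f"missing required field: {name}")
            continue
        value = record[name]
        if not isinstance(value, fm.py_type):
            errors.append(f"wrong type for {name}: expected {fm.py_type.__name__}")
        elif fm.allowed and value not in fm.allowed:
            errors.append(f"invalid value for {name}: {value!r}")
    # Referential integrity: the parent project must actually exist.
    pid = record.get("project_id")
    if isinstance(pid, str) and pid not in known_projects:
        errors.append(f"project_id does not reference an existing project: {pid}")
    return errors


# An agent invents a priority, a field, and a parent project.
proposed = {"title": "Export invoices", "priority": "Urgent",
            "project_id": "P-9", "owner": "nobody"}
for problem in validate(proposed, REQUIREMENT_META, known_projects={"P-1"}):
    print(problem)
```

The point of the sketch is where the rules live: in metadata the platform already holds, not in the agent's prompt. A hallucinated picklist value or a made-up parent record is rejected at the write, before it can propagate.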

Platform Governance Should Be Your First AI Question

Many companies are getting one thing wrong about AI right now: they’re spending all their evaluation energy on the agent and almost none on the platform the agent operates within.

The questions I hear most: Which model should we use? What’s the best agent framework? Should we go with Copilot or Agentforce? Those aren’t bad questions, but they’re the wrong first questions.

The first questions should be:

- When this agent reads and writes data in our business systems, what governs that access?

- What stops it from seeing records it shouldn’t?

- What logs the decisions it makes?

- What ensures the data it reads is structured, clean, and contextually accurate?

If you don’t have answers to those, the model doesn’t matter. You’ve built a capable agent on a foundation that can’t be trusted.

In Practice, Governance Works Directly Inside Delivery Intelligence

Let me make this concrete with what we’ve built.

Our Delivery Intelligence agents operate across multiple data sources: Salesforce CRM for pipeline and customer data, Certinia PSA for project financials and resource management, Azure DevOps for code and work items, SharePoint for delivery knowledge. Every one of those connections runs through MCP servers (which we covered in Post 3) and is orchestrated through MuleSoft Agent Fabric.

But the governance doesn’t live in the orchestration layer alone. It lives in Salesforce itself.

When we deliver a Salesforce project, our customers’ data flows into our environment. Their project requirements, their business processes, their technical specifications, their timelines and budgets. That data feeds our Delivery Intelligence agents. The Requirements Agent reasons over it. The Workshop Planning Agent structures it. The estimation tools reference it. Our customers’ proprietary business information is being shared with us and consumed by AI agents that help us deliver their project faster and better.

That Trust Relationship Only Works Because of What’s Underneath

When our Requirements Agent generates functional requirements for a client project, here’s what’s happening: the agent accesses project data through the Certinia MCP server, but it only sees projects that the requesting user has permission to access. It reads from delivery knowledge in SharePoint, but only the knowledge collections that have been approved and curated for agent consumption. It writes outputs back to a structured data store in Salesforce, where those outputs inherit the sharing model of the parent project. Every action is logged. Every data access is governed by the same permission model that governs human users.

We didn’t build any of that governance. We inherited it. It was already there because Salesforce has been enforcing those patterns for years. When a customer asks us how their data is protected within our AI-augmented delivery process, the answer isn’t “we built a custom security layer.” The answer is “your data is governed by the same Salesforce permission model, audit framework, and access controls that enterprises have been trusting for two decades.”
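For readers who want to see the shape of that pattern, here is a small Python model of it: reads scoped to the requesting user, outputs that inherit the parent project's sharing, and a log entry for every attempt. It is a sketch under stated assumptions, not how Salesforce implements sharing and not our agent code. `Store`, `Project`, and every method name are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Project:
    project_id: str
    shared_with: set[str]  # users allowed to see this project (hypothetical model)


@dataclass
class Store:
    """Toy in-memory stand-in for a governed data store."""
    projects: dict[str, Project]
    outputs: list[dict] = field(default_factory=list)
    audit_log: list[dict] = field(default_factory=list)

    def _log(self, user: str, action: str, project_id: str, allowed: bool) -> None:
        # Every attempt is recorded, including the ones that are denied.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "action": action,
            "project_id": project_id,
            "allowed": allowed,
        })

    def read_project(self, user: str, project_id: str) -> Project:
        # The agent acts as the requesting user; it has no access of its own.
        project = self.projects.get(project_id)
        allowed = project is not None and user in project.shared_with
        self._log(user, "read", project_id, allowed)
        if not allowed:
            raise PermissionError(f"{user} cannot access {project_id}")
        return project

    def write_output(self, user: str, project_id: str, text: str) -> dict:
        # Writing requires read access to the parent project first.
        project = self.read_project(user, project_id)
        output = {
            "project_id": project_id,
            "text": text,
            # The output inherits the parent's sharing instead of defining its own.
            "shared_with": set(project.shared_with),
        }
        self.outputs.append(output)
        self._log(user, "write", project_id, True)
        return output


store = Store(projects={
    "P-1": Project("P-1", {"alice"}),
    "P-2": Project("P-2", {"bob"}),
})
store.write_output("alice", "P-1", "REQ-1: export invoices nightly")
try:
    store.read_project("alice", "P-2")  # alice is not on this project
except PermissionError as exc:
    print(exc)
print([(e["action"], e["allowed"]) for e in store.audit_log])
```

Even this toy version takes real design decisions: deny by default, log the denials, copy sharing from the parent. On a mature platform those decisions were made, tested, and audited long before an agent showed up.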

Compare that to agents built on top of unstructured data with improvised tooling: an LLM pointed at a file share or data lake with inconsistent permissions. The agent reads everything it can reach.

- No metadata to flag what’s sensitive

- No permission model mapped to business roles

- No structured framework for logging AI actions

- No schema validation to catch hallucinated output before it propagates

That’s not a theoretical risk.
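A short sketch shows what the first gap on that list costs you. When field-level metadata says which permission each field requires, the view handed to an agent can be trimmed to what the requesting user may see. The `FIELD_PERMISSIONS` map, the permission names, and `agent_view` below are hypothetical and only illustrate the idea.

```python
# Hypothetical field-level metadata: field name -> permission required to see it.
# None means the field is visible to anyone who can see the record.
FIELD_PERMISSIONS = {
    "name": None,
    "status": None,
    "budget": "view_financials",
    "contact_email": "view_pii",
}


def agent_view(record: dict, user_permissions: set[str]) -> dict:
    """Return only the fields the requesting user is allowed to see.

    Fields with no metadata entry are withheld (deny by default), so an
    unclassified field never reaches the model by accident.
    """
    view = {}
    for name, value in record.items():
        if name not in FIELD_PERMISSIONS:
            continue  # no metadata, so we cannot prove it is safe to share
        needed = FIELD_PERMISSIONS[name]
        if needed is None or needed in user_permissions:
            view[name] = value
    return view


record = {
    "name": "ERP rollout",
    "status": "Active",
    "budget": 250000,
    "contact_email": "pm@example.com",
    "notes_raw": "unclassified free text",
}
print(agent_view(record, user_permissions=set()))
print(agent_view(record, user_permissions={"view_financials"}))
```

Point an LLM at a file share and there is no equivalent of `FIELD_PERMISSIONS` to consult. Everything reachable is fair game.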

The Vibe-Coding Nightmare (A Real Conversation)

In Post 1, I wrote a line that got more reaction than almost anything else in the series: the thought of someone vibe-coding their way to a financial management system that competes with Certinia gives us nightmares.

I want to unpack that with a real conversation I had recently.

I was speaking with a CEO whose company is in proprietary education and certification. Their product is the knowledge their subject matter experts have accumulated over decades, packaged into courses and credentials that professionals pay for. His company had just attended an AI bootcamp, and they came back with this advice: “You can just vibe-code an LMS now. It would only take a few months, then you just need to maintain it.”

On the surface, that sounds reasonable. AI-assisted development is powerful. You could scaffold the application, build the UI, wire up the database, and have something that looks like a functioning LMS in a few months.

This Advice Misses the Full Picture

You’d need the vision and creativity to build genuinely good software, not just functional software. You’d have to keep updating it: browser changes, security patches, accessibility standards, mobile platforms, certification requirements. You’d need payment processing, credential verification, reporting, compliance tracking, authentication, content delivery at scale.

And the core problem: this company’s product isn’t software. It’s their education and certification IP. The knowledge that lives in their subject matter experts. The credibility of their credentials in the market. Every hour spent maintaining a custom LMS is an hour not spent on what actually makes them money.

For a company whose product is software, vibe coding is a genuine force multiplier. For everyone else, using a mature, affordable, safe, and scalable platform to deliver their product is almost always smarter. The platform handles the boring infrastructure so they can focus on differentiation.

The same logic applies at enterprise scale. Certinia exists because financial management at scale requires years of domain expertise, regulatory compliance, audit requirements, multi-currency support, revenue recognition rules, and the accumulated edge cases of thousands of enterprise deployments. That knowledge is encoded in the platform. You can’t vibe-code your way to ASC 606 compliance. MuleSoft exists because integration governance is genuinely hard. Salesforce and Agentforce exist because CRM at enterprise scale requires structured data models, permission frameworks, and workflow engines that have been refined over two decades.

Our agents don’t replicate what Salesforce and Certinia already do well. They make those platforms intelligent by adding AI on top of them.

Shifting Your AI Risk Profile

This is where the conversation moves from technology to business.

When you build agents on top of a governed, structured platform, the risk profile of those agents fundamentally changes. Your CISO doesn’t have to evaluate a new security model because the agent inherits the security model that’s already been approved. The compliance team doesn’t have to design new audit processes because the agent’s actions are logged in the same audit framework they already monitor. Your data governance team doesn’t have to invent new access controls because the agent respects the same permission boundaries as human users.

This is why we’re able to move at the pace we’re moving. Our biweekly release cadence doesn’t come from cutting corners on governance. It comes from not having to build governance from scratch for each new capability. Every new agent we add inherits the full trust layer of the Salesforce platform.

For our customers, this matters in two ways. First, every project they engage us on benefits from an AI-augmented delivery process where their data is governed by enterprise-grade infrastructure, not stitched-together tooling. Second, when those same customers are ready to build their own agentic capabilities on Salesforce, the same foundational advantages apply. The governance framework they already have in place — the permissions, the audit trails, the structured data models — becomes the trust layer for their own agents. They don’t have to start from scratch. The foundation is already there.

Try doing that with agents built on top of a standalone vector database and a direct API connection to an LLM. You’ll spend more time building the governance layer than you spend building the agent.

You Need to Know This If You’re Evaluating AI Strategy

If you’re a business leader evaluating where to invest in agentic AI, here’s the honest assessment:

The model will change. The agent frameworks will evolve. The capabilities will get better. All of that is in flux and will remain in flux for years. Picking the “right” model today is a bet that will need to be revisited.

The platform underneath, though, is the durable decision. If your structured data, your permissions, your audit capabilities, and your governance framework are already mature, then every AI capability you add benefits from that foundation. If they’re not, then every AI capability you add is a new governance problem you have to solve from scratch.

Salesforce isn’t the only platform with these characteristics. But it’s the one we know deeply, and it’s the one where we’ve proven that the foundation is strong enough to support what we’re building. Twenty years of metadata, permissions, and structured data didn’t seem exciting when they were being built. Now they’re the reason we can deploy agentic AI with confidence.

The boring stuff was always the hard stuff. It just took AI to make that obvious.

What Shifted

Six months ago I was genuinely worried about whether Salesforce as a platform had a future in an AI-driven world. Building on it every day has reversed that completely. The SaaSpocalypse narrative gets the direction of the threat exactly backwards. AI doesn’t make these platforms obsolete. It makes the governance, structure, and domain knowledge they’ve accumulated over decades more valuable than ever. The companies that will struggle aren’t the ones running enterprise SaaS. They’re the ones trying to build AI without it.

What’s Next

Post 5 digs into something most companies aren’t thinking about yet: efficiency. Not just “does the agent work?” but “how much compute, how many tokens, and how much energy is it consuming to get the answer?” The architecture decisions that make agents efficient aren’t obvious, and the difference between a well-scoped query and a lazy one is enormous. We’ll share real numbers from our own build.

Where We Are Now

Delivery Intelligence is live and shipping biweekly releases. The Workshop Planning Agent and Requirements Agent are in production on active projects. Build Agent is in development. The full Delivery Intelligence stack is targeted for completion by summer 2026. Want to see it in action? Talk to our team.

Diabsolut is a Salesforce consulting partner building the agentic enterprise in the open. Follow the series to see what’s working, what we’re learning, and what’s coming next.

Read more in our Agentic Enterprise Series

- Part 1: Diabsolut: The Accidental Agentic Enterprise

- Part 2: Agents Don’t Run the Show — People Do

- Part 3: Zero to Live in a Week: What That Actually Requires

- Part 4: Building on Salesforce’s Boring Foundation (We Mean That as a Compliment)
