Agents Don’t Run the Show — People Do

How we think about human-in-the-loop design, AI Slop, and the skill nobody talks about

Part 2 of The Accidental Agentic Enterprise series. [Start with Part 1 here.]

Author: John Pettifor

We know — you probably expected the next post to be all about the technology. The stack, the architecture, the agents themselves. That’s coming. But we’d be doing you a disservice if we got there before we talked about the most important part of building an agentic enterprise: the humans inside it.

There’s a version of the agentic enterprise that sounds great on paper: agents handling everything end-to-end, humans reviewing outcomes rather than doing work, the whole machine running mostly on its own.

We’re not building that.

Not because we can’t, and not because we’re being cautious. Because we’ve learned — sometimes the hard way — that full autonomy is the wrong goal. The right goal is putting human judgment exactly where it’s needed, and removing everything around it that was wasting that judgment in the first place.

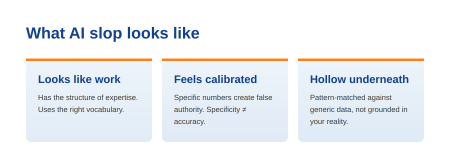

What AI Slop Actually Looks Like

“AI Slop” gets thrown around a lot. In our world — professional services, Salesforce implementations, detailed technical delivery — it has a specific shape.

It’s not obviously wrong output. Obvious errors are easy to catch. AI Slop is confidently wrong output.

- It looks like work;

- It has the structure of expertise;

- It uses the right vocabulary…

- And it’s hollow underneath.

The Estimate that Looked Right: A Real Example from Our Own Build

Early in the process, someone on our team used an AI tool to estimate the data migration component of a project. The output came back detailed and specific: 13 hours for this task, 6 days for that phase. It had the visual authority of a real estimate; the kind of numbers that end up in a proposal, get approved, and become a commitment.

The problem:

- The AI had no idea what data migration tooling we use.

- It didn’t know our methodology.

- It had never seen how we actually run a migration.

It pattern-matched against generic project data and produced numbers that felt calibrated because they were specific, not because they were grounded in anything real.

The person who generated the estimate didn’t catch it. Not because they weren’t capable, but because the output looked like it knew what it was doing. That’s the trap. The more specific the numbers, the more trustworthy they feel. Specificity is not accuracy.

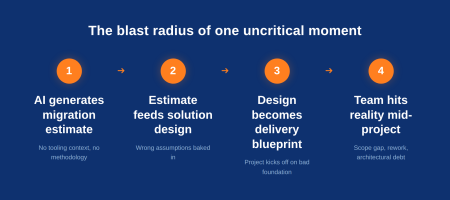

And here’s where it gets worse. That estimate doesn’t stay in a spreadsheet; it feeds the solution design. A solution architect working from a flawed estimate scopes the migration approach around the wrong assumptions: wrong tooling selected, wrong complexity accounted for, wrong sequencing planned. The solution design becomes the delivery blueprint. The project kicks off, and nobody flags it, because the numbers look thorough. The team hits the migration phase and discovers the actual effort is nothing like what was scoped. Now you’re mid-project with a scope gap, a client who signed off on a number that was never real, and a technical approach that has to be unwound and rebuilt. The debt isn’t just financial — it’s architectural. Decisions made downstream leave residue in the system long after the project closes.

One uncritical moment with an AI tool. One confident-looking output accepted without interrogation. That’s the blast radius.

The Real Problem Isn’t the AI

The agent did exactly what it was asked to do. It generated an estimate. Nobody told it what tools we use. Nobody gave it our historical delivery data. Nobody asked it to flag its own uncertainty. It filled in the gaps with plausible-sounding answers, because that’s what language models do when they don’t have the context they need.

The failure wasn’t the AI; it was the human-AI relationship.

Getting good output from an AI agent requires two skills

Most people are still developing both:

- Knowing how to ask.

Not “generate an estimate for data migration” but “given that we use [specific tooling], following [specific methodology], on projects of [specific scope], generate a time estimate and flag any assumptions you’ve had to make.” The brief quality determines the output quality. Always.

- Knowing how to evaluate.

This is harder, and it’s where domain expertise becomes non-negotiable. If you don’t know enough about data migration to sanity-check a 13-hour estimate, you can’t be the one reviewing AI-generated migration estimates. The human in the loop has to be the right human: someone with enough context to know when the output smells wrong.

Both of these are learnable skills. Neither of them comes automatically from having access to an AI tool.
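To make "knowing how to ask" concrete, here is a minimal sketch of a brief that cannot be sent until the context is filled in. It is an illustration under stated assumptions, not a description of any particular tool: the `EstimateBrief` class and its field names are hypothetical, and the prompt wording simply mirrors the example above.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class EstimateBrief:
    """Context an estimate should be grounded in (all fields hypothetical)."""
    tooling: str       # the migration tooling actually in use
    methodology: str   # how the team runs a migration
    scope: str         # size and shape of the project

    def missing_fields(self) -> list[str]:
        """Return the names of context fields that were left blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def to_prompt(self) -> str:
        """Build the prompt, refusing to proceed if any context is missing."""
        missing = self.missing_fields()
        if missing:
            raise ValueError("Brief is missing context: " + ", ".join(missing))
        return (
            f"Given that we use {self.tooling}, following {self.methodology}, "
            f"on projects of {self.scope}, generate a time estimate for the "
            "data migration. List every assumption you had to make, and say "
            "'unknown' rather than guessing where you lack information."
        )
```

The design choice is that a blank field raises an error instead of producing a weaker prompt. The gap gets caught before the model has a chance to fill it with something plausible.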

The Deck Problem

The data migration story is about bad input leading to bad output. There’s a related failure mode that’s more subtle, and we see it constantly.

Someone needs to build a presentation. They open their AI tool of choice and type: “Create me a deck that tells the story of our Q2 results to the leadership team.”

The AI produces a deck. It has slides. It has a narrative arc of sorts. It looks like a presentation.

But the person skipped the hard part: figuring out what the story actually is.

- What’s the key insight from Q2?

- What does leadership need to feel, not just know?

- What’s the one thing you want them to walk away with?

- What’s the tension you’re resolving?

AI is an extraordinary executor of a clear brief. It is a poor substitute for having the brief in the first place. When you outsource the thinking along with the production, you get output that is structured but hollow — a deck that covers the material without making a point.

The right sequence is: work out the narrative first, even if that’s just a ten-minute conversation with yourself or a quick back-and-forth with the AI to pressure-test your thinking. Then build the deck. Used in that order, AI genuinely accelerates the work. Used in the wrong order, it produces the professional services equivalent of a Wikipedia summary — accurate, organized, and forgettable.

How We Design for Human-AI Collaboration

Knowing all of this, here’s how we’ve thought about human-in-the-loop design across our own agent stack.

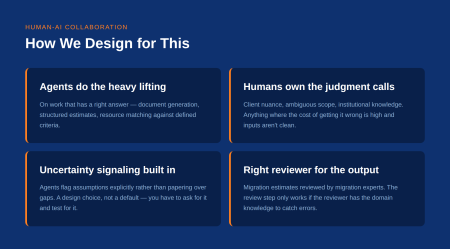

- Agents do the heavy lifting on work that has a right answer.

  - Document generation

  - Structured estimates based on known inputs

  - Agenda creation from a defined brief

  - Resource matching against defined criteria

These are tasks where the shape of a good output is knowable in advance, the inputs can be specified precisely, and a reviewer can evaluate correctness quickly.

- Humans own anything that requires judgment about context we haven’t fully codified.

  - Client relationship nuance

  - Scope calls where the risk is ambiguous

  - Decisions that depend on institutional knowledge that hasn’t made it into the knowledge base yet

These don’t go through an agent alone. The agent could generate something, but the cost of getting it wrong is high and the inputs aren’t clean enough to trust the output.

- We build uncertainty signaling into our agents.

When an agent is working with incomplete information or making an assumption, we want it to say so explicitly rather than paper over the gap with confident-sounding output. This is a design choice, not a default behavior. You have to ask for it, and you have to test for it. An agent that doesn’t know how to flag its own uncertainty is a liability waiting to materialize.

- We match the reviewer to the output.

An AI-generated migration estimate gets reviewed by someone who actually knows our migration methodology. A scoping document gets reviewed by a delivery lead who’s run that kind of project. The review step only works if the reviewer has the domain knowledge to catch what the AI got wrong. More than just a checkbox, human-in-the-loop is a quality gate, and the gate is only as good as the person standing at it.
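The last two principles, uncertainty signaling and reviewer matching, can be sketched as a single review gate. This is a simplified illustration, not a definitive implementation: every name here (`AgentOutput`, `Reviewer`, `GateDecision`, `review_gate`, and the status strings) is hypothetical, and it assumes the agent reports its assumptions and missing inputs as structured fields rather than burying them in prose.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AgentOutput:
    kind: str                                   # e.g. "migration_estimate"
    body: str
    assumptions: list[str] = field(default_factory=list)     # flagged by the agent
    missing_inputs: list[str] = field(default_factory=list)  # context it lacked


@dataclass
class Reviewer:
    name: str
    domains: set[str]           # output kinds this person can sanity-check


@dataclass
class GateDecision:
    status: str                 # "blocked", "escalate", or "review"
    reviewer: Optional[str] = None
    notes: list[str] = field(default_factory=list)


def review_gate(output: AgentOutput, reviewers: list[Reviewer]) -> GateDecision:
    # Missing context: send the work back instead of reviewing a guess.
    if output.missing_inputs:
        return GateDecision("blocked", notes=list(output.missing_inputs))
    # Match the reviewer to the output: only someone with the domain qualifies.
    match = next((r for r in reviewers if output.kind in r.domains), None)
    if match is None:
        return GateDecision("escalate", notes=[f"no qualified reviewer for {output.kind}"])
    # Hand the flagged assumptions to the reviewer so they know where to look.
    return GateDecision("review", reviewer=match.name, notes=list(output.assumptions))
```

In this sketch an output with no qualified reviewer is escalated rather than approved by whoever is available, which is the "right human" rule expressed as a default.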

Why Consulting Has Never Been More Valuable

There’s a narrative in the market that AI is commoditizing consulting. We think it’s exactly backwards.

AI has made the execution of technology work faster and cheaper. What it can’t do well is make the judgment calls that determine whether you’re executing the right thing.

There are always twelve ways to accomplish something with technology. Which one is right for your organization depends on things no model can fully assess:

- the nuance of your business

- the skills and appetite of your people

- the political reality of your leadership team

- the change fatigue from the last three initiatives

- the difference between what’s technically optimal and what will get adopted

Those are human judgments. They require experience, pattern recognition, and the kind of contextual understanding that only comes from having done this in many organizations across many industries.

What AI does is collapse the distance between a good decision and a delivered outcome. That concentrates consulting value at the decision point, which means the quality of the thinking at the front end matters more than ever. A wrong architectural decision doesn’t cost you weeks of manual work to discover anymore. It costs you weeks of AI-accelerated build heading in the wrong direction.

Good consultants have always been paid to know which of the twelve options is right for you. AI just made that judgment more consequential, and more visible, than it’s ever been.

The Skill Nobody Talks About

Here’s a question worth sitting with: if you got to work alongside Stephen Hawking or Marie Curie, would you learn from them or would you just hand them your work?

Most people, given access to one of the greatest minds in history, would do the latter. They’d offload the task, take the output, and move on. And they’d be wasting the opportunity entirely.

AI is that genius. And most people are just handing it their work.

Building the Muscle: Strong Users Do It Differently

The firms and individuals who are pulling ahead aren’t the ones with the most AI tools or the biggest licenses. They’re the ones who treat AI as a collaborator to think alongside, and who use it to pressure-test their reasoning, stress-test their assumptions, and sharpen their own thinking before they ask it to produce anything. The output gets better because the person got better.

Every conversation about AI in professional services eventually lands on: what work will AI replace? It’s the wrong question, or at least it’s incomplete.

The more important question is: what does good human-AI collaboration actually look like, and how do you build that skill across a team?

The firms that will struggle aren’t the ones that adopt AI too slowly. They’re the ones that adopt AI without developing the judgment to use it well — who hand off the thinking along with the production, who accept confident-looking output without the domain knowledge to interrogate it, and who mistake the presence of an AI tool for having an AI strategy.

We’re still building this muscle ourselves. The data migration story happened to us. The deck problem is something we catch each other on regularly. The difference is that we’re paying attention to the failure modes, naming them when they occur, and designing our systems to make them less likely.

That’s what human-in-the-loop actually means in practice: a genuine understanding of where AI judgment ends and human judgment has to begin… and the discipline to hold that line even when the AI output looks convincing.

If you’re planning a Salesforce implementation — or simply exploring how to turn your platform into a more intelligent, AI-driven system — our Delivery Success team is always available for a conversation. We’re happy to review your current org, discuss opportunities for improvement, and help identify the best place to start.

Read more in our Agentic Enterprise Series

- Part 1: Diabsolut: The Accidental Agentic Enterprise

- Part 2: Agents Don’t Run the Show — People Do

- Part 3: Zero to Live in a Week: What That Requires

- Part 4: Building on Salesforce’s Boring Foundation (We Mean That as a Compliment)

- Part 5: Why We Don’t Build Everything Ourselves
